AI

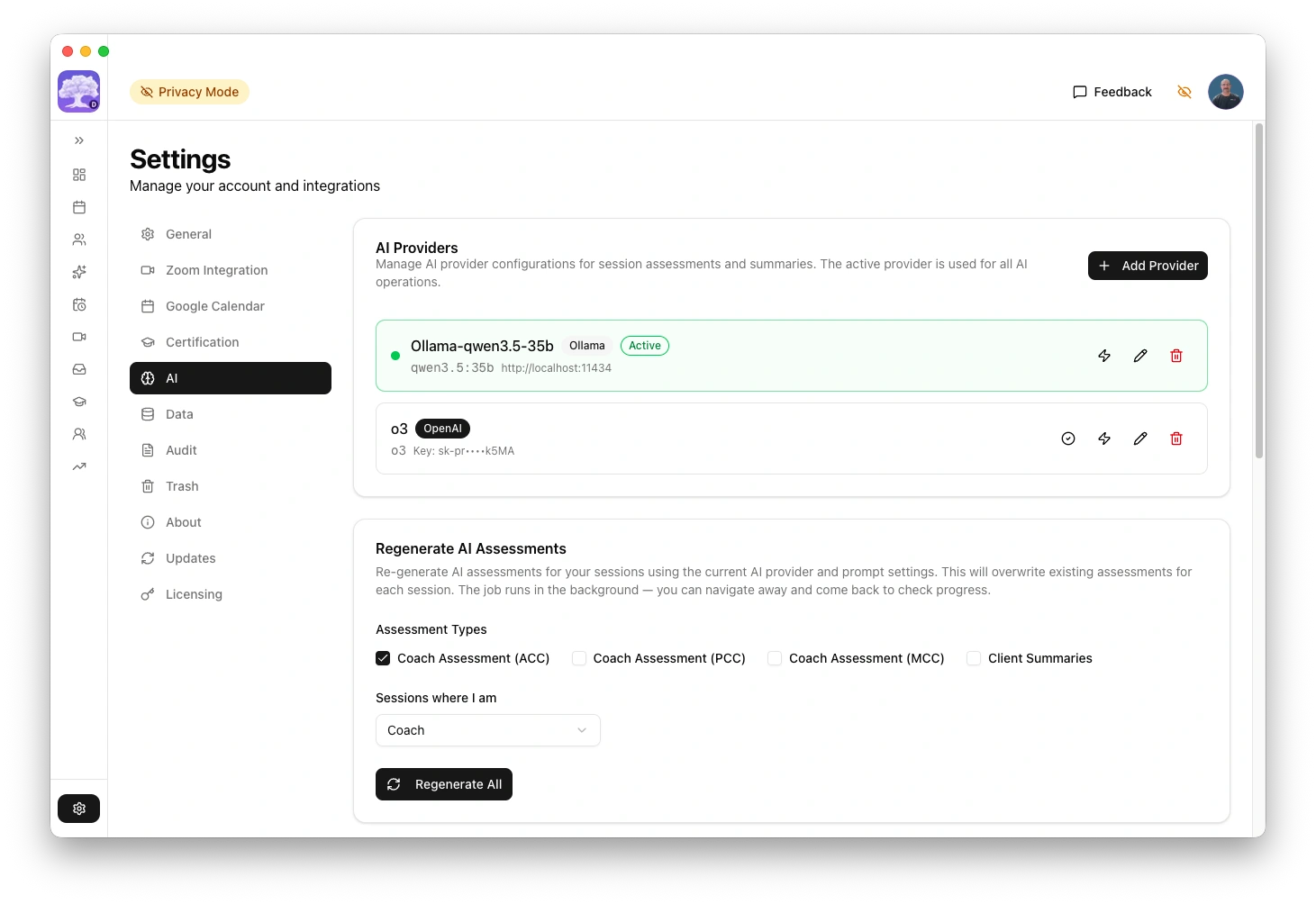

The platform comes with pre-configured AI providers that perform a number of tasks, such as analysing your coaching transcripts and generating ICF competency assessments.

For local providers you have installed, such as Ollama and LM Studio, you may need to change the local port to match the one the provider is running on. Then select the model you have downloaded and installed through that provider. No API keys are required for local providers.
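If you are unsure which port a local provider is using or which models it has installed, you can ask the provider directly. The following is a minimal sketch, not the platform's own code. It assumes the usual default ports (11434 for Ollama, 1234 for LM Studio) and the model-listing endpoints those tools expose (`/api/tags` and `/v1/models`). The provider labels `"ollama"` and `"lmstudio"` and all function names are hypothetical.

```python
import json
from typing import List, Optional
from urllib.request import urlopen

# Usual default ports for each tool (assumption: change if yours differ).
DEFAULT_PORTS = {"ollama": 11434, "lmstudio": 1234}


def models_url(provider: str, port: Optional[int] = None) -> str:
    """Build the model-listing URL for a local provider."""
    port = port or DEFAULT_PORTS[provider]
    # Ollama lists models at /api/tags; LM Studio uses the
    # OpenAI-compatible /v1/models endpoint.
    path = "/api/tags" if provider == "ollama" else "/v1/models"
    return f"http://localhost:{port}{path}"


def parse_model_names(provider: str, payload: dict) -> List[str]:
    """Extract model names from the provider's JSON response."""
    if provider == "ollama":
        return [m["name"] for m in payload.get("models", [])]
    return [m["id"] for m in payload.get("data", [])]


def list_local_models(provider: str, port: Optional[int] = None,
                      timeout: float = 5.0) -> List[str]:
    """Query a running local provider for its installed models."""
    with urlopen(models_url(provider, port), timeout=timeout) as resp:
        return parse_model_names(provider, json.load(resp))
```

Calling `list_local_models("ollama")` while Ollama is running should return the names of the models you have pulled. If the call fails with a connection error, the provider is probably not running or is listening on a different port.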

Cloud providers require an API key before you can select and use a model. Each provider in the table below is linked to its setup page, which explains how to create a key if you do not already have one. All API usage costs for cloud providers are your responsibility.

The platform is designed so that when an existing provider adds a new model you can select it as soon as it is available for use.

AI providers

| Provider | Type | Cost | Privacy | Recommended for assessment |
|---|---|---|---|---|
| Ollama | Local | Free | Nothing leaves your computer | Yes (qwen3 larger models) |
| LM Studio | Local | Free | Nothing leaves your computer | Use with caution |
| llama-server | Local (Advanced) | Free | Nothing leaves your computer | Yes (with reasoning budget) |
| OpenAI | Cloud API | Paid credits up front | Transcripts sent to OpenAI | Yes (o3-mini, o3) |
| Anthropic | Cloud API | Paid credits up front | API data not used for training | Yes (Claude Sonnet) |
| xAI (Grok) | Cloud API | Paid credits up front | Transcripts sent to xAI | Yes (grok reasoning) |
| Perplexity | Cloud API | Subscription | Transcripts sent to Perplexity | Use with caution |
| Mistral | Cloud API | Pay-as-you-go | Data processed in EU | Untested |
| Groq | Cloud API | Free tier available | Transcripts sent to Groq | Untested |
| Google Gemini | Cloud API | Paid usage at the end of each month | Transcripts sent to Google | Not recommended |

All providers produce ICF competency assessments with specific transcript evidence. The quality depends on which model you choose. See Choosing a model for recommendations and test results. For rate limits, context windows, and output token budgets across all cloud providers, see API limits.

With local providers (Ollama, LM Studio, and llama-server), nothing leaves your computer. With cloud providers, transcripts are sent to the provider's servers for processing. All produce the same type of ICF assessment, so choose based on your privacy requirements and assessment quality needs.

Advanced tuning

Each provider supports optional advanced tuning.

| Tuning | Description |
|---|---|
| Max output tokens | Increase if assessments are being cut short |
| Temperature | Lower values produce more consistent results |
| Timeout | Increase for slower models or long transcripts |
| Context limit | Override the automatic context window detection |
| Enable thinking | For llama-server with --reasoning-budget. Disabled by default for Ollama and LM Studio. See AI analysis modes for details |
| System memory | Override memory detection for Ollama and LM Studio context window sizing |

Most users do not need to change these settings. The defaults work well for typical coaching sessions.

Troubleshooting

AI assessment not generating

- Check that a provider is configured and marked as active

- For cloud providers: verify your API key is correct and has available credits

- For Ollama: verify Ollama is running (look for the icon in your menu bar)

- For LM Studio: verify LM Studio is running with a model loaded and the local server started
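For the local-provider checks above, a quick way to confirm that something is actually listening on the configured port is to attempt a TCP connection. This is a minimal sketch under stated assumptions: the function name `is_port_open` is hypothetical, and ports 11434 (Ollama) and 1234 (LM Studio) are those tools' usual defaults, which may differ on your machine.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        # create_connection raises OSError (e.g. connection refused,
        # timeout) when nothing is accepting connections on the port.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Assumed default ports; adjust to match your provider settings.
    for name, port in (("Ollama", 11434), ("LM Studio", 1234)):
        state = "reachable" if is_port_open("localhost", port) else "not reachable"
        print(f"{name} on port {port}: {state}")
```

An open port only shows that a server is running. For LM Studio you still need a model loaded before assessments can be generated.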

Assessment seems shallow or generic

The model you choose has a significant impact on quality. General-purpose chat models tend to produce inflated scores with vague evidence. See Choosing a model for recommendations.

Transcript too long

On systems with 16 GB RAM, local models may not have a large enough context window for long transcripts. Consider using a cloud provider for longer sessions, or increase the context limit in Advanced tuning.
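To get a rough idea of whether a transcript will fit before you run an assessment, you can estimate its token count from its length. The sketch below is an approximation only. It assumes about 4 characters per token, a common rule of thumb for English text that varies by model and tokenizer, and a hypothetical `reserved_tokens` allowance for the prompt and the generated assessment. Neither value comes from the platform.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate based on character count (assumption)."""
    return math.ceil(len(text) / chars_per_token)


def fits_context(transcript: str, context_limit: int,
                 reserved_tokens: int = 4096) -> bool:
    """Check whether the transcript plus a reserved allowance for the
    prompt and output stays within the model's context limit."""
    return estimate_tokens(transcript) + reserved_tokens <= context_limit
```

For example, a 40,000-character transcript is estimated at 10,000 tokens, which would not fit an 8,192-token context window under these assumptions.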

Timeout errors

Some models take longer for complex assessments (PCC/MCC with 39 markers). Increase the timeout in Advanced tuning for the provider. Claude Sonnet typically needs about 4 minutes for a full assessment.